Sacre BLEU Score

Module Interface

- class torchmetrics.text.SacreBLEUScore(n_gram=4, smooth=False, tokenize='13a', lowercase=False, weights=None, **kwargs)

Calculate BLEU score of machine translated text with one or more references.

This implementation follows the behaviour of SacreBLEU. The SacreBLEU implementation differs from the NLTK BLEU implementation in tokenization techniques.
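To illustrate why the tokenization technique matters, here is a minimal sketch comparing two tokenizers. Both functions are hypothetical and deliberately simplified; they are not the rules used by SacreBLEU's '13a' tokenizer or by NLTK, and only show that different tokenizers produce different n-grams from the same sentence.

```python
import re


def whitespace_tokenize(text: str) -> list[str]:
    # Split on whitespace only; punctuation stays attached to words.
    return text.split()


def punctuation_aware_tokenize(text: str) -> list[str]:
    # Hypothetical simplified rule: emit words and punctuation marks
    # as separate tokens.
    return re.findall(r"\w+|[^\w\s]", text)


sentence = "Hello, world!"
print(whitespace_tokenize(sentence))         # ['Hello,', 'world!']
print(punctuation_aware_tokenize(sentence))  # ['Hello', ',', 'world', '!']
```

Because BLEU counts matching n-grams, 'Hello,' and 'Hello' would be treated as different unigrams, so scores computed under different tokenizers are not directly comparable.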

As input to forward and update, the metric accepts the following input:

- preds (Sequence): An iterable of machine-translated corpus
- target (Sequence): An iterable of iterables of reference corpus

As output of forward and compute, the metric returns the following output:

- sacre_bleu (Tensor): A tensor with the SacreBLEU score
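The update/compute split can be pictured with a small pure-Python sketch. The class TinyCorpusBLEU and the helper ngram_counts below are hypothetical names, not part of torchmetrics; the sketch assumes whitespace tokenization, uniform weights, and no smoothing, and only illustrates how corpus-level counts might be accumulated across batches before a score is computed.

```python
import math
from collections import Counter


def ngram_counts(tokens, max_n):
    # Count every n-gram of order 1..max_n in a token list.
    counts = Counter()
    for n in range(1, max_n + 1):
        for i in range(len(tokens) - n + 1):
            counts[tuple(tokens[i:i + n])] += 1
    return counts


class TinyCorpusBLEU:
    """Hypothetical illustration only; not the torchmetrics class."""

    def __init__(self, n_gram=4):
        self.n_gram = n_gram
        self.matches = [0] * n_gram  # clipped n-gram matches per order
        self.totals = [0] * n_gram   # predicted n-grams per order
        self.preds_len = 0
        self.target_len = 0

    def update(self, preds, target):
        for pred, refs in zip(preds, target):
            pred_tokens = pred.split()
            refs_tokens = [ref.split() for ref in refs]
            self.preds_len += len(pred_tokens)
            # Use the reference length closest to the prediction length.
            self.target_len += min(
                (len(r) for r in refs_tokens),
                key=lambda n: (abs(n - len(pred_tokens)), n),
            )
            pred_counts = ngram_counts(pred_tokens, self.n_gram)
            max_ref_counts = Counter()
            for ref_tokens in refs_tokens:
                # Counter union keeps the maximum count per n-gram.
                max_ref_counts |= ngram_counts(ref_tokens, self.n_gram)
            # Counter intersection clips each count to the reference maximum.
            for gram, count in (pred_counts & max_ref_counts).items():
                self.matches[len(gram) - 1] += count
            for gram, count in pred_counts.items():
                self.totals[len(gram) - 1] += count

    def compute(self):
        if min(self.matches) == 0:
            return 0.0
        log_precision = sum(
            math.log(m / t) for m, t in zip(self.matches, self.totals)
        ) / self.n_gram
        if self.preds_len >= self.target_len:
            brevity_penalty = 1.0
        else:
            brevity_penalty = math.exp(1 - self.target_len / self.preds_len)
        return brevity_penalty * math.exp(log_precision)


metric = TinyCorpusBLEU()
metric.update(['the cat is on the mat'],
              [['there is a cat on the mat', 'a cat is on the mat']])
print(round(metric.compute(), 4))  # 0.7598
```

Accumulating counts rather than averaging per-batch scores means the result does not depend on how the corpus is split into batches.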

- Parameters:

tokenize (Literal['none', '13a', 'zh', 'intl', 'char', 'ja-mecab', 'ko-mecab', 'flores101', 'flores200']) – Tokenization technique to be used. Choose between 'none', '13a', 'zh', 'intl', 'char', 'ja-mecab', 'ko-mecab', 'flores101' and 'flores200'.

lowercase (bool) – If True, BLEU score over lowercased text is calculated.

kwargs (Any) – Additional keyword arguments, see Advanced metric settings for more info.

weights (Optional[Sequence[float]]) – Weights used for unigrams, bigrams, etc. to calculate BLEU score. If not provided, uniform weights are used.
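The role of weights can be sketched as a weighted geometric mean of the per-order n-gram precisions. The function combine_precisions below is a hypothetical helper, not a torchmetrics API. The precisions 5/6, 4/5, 3/4 and 2/3 are worked out by hand for the prediction 'the cat is on the mat' against the references 'there is a cat on the mat' and 'a cat is on the mat'; the brevity penalty is left out because it equals 1 for that pair.

```python
import math


def combine_precisions(precisions, weights=None):
    # Hypothetical helper: weighted geometric mean of n-gram precisions.
    n = len(precisions)
    if weights is None:
        # Uniform weights: each n-gram order contributes equally.
        weights = [1.0 / n] * n
    if any(p == 0 for p in precisions):
        return 0.0
    return math.exp(sum(w * math.log(p) for w, p in zip(weights, precisions)))


# Clipped precisions for unigrams, bigrams, trigrams and 4-grams.
precisions = [5 / 6, 4 / 5, 3 / 4, 2 / 3]

print(round(combine_precisions(precisions), 4))                        # 0.7598
print(round(combine_precisions(precisions, [1.0, 0.0, 0.0, 0.0]), 4))  # 0.8333
```

With uniform weights the result matches the value shown in this page's example; putting all weight on unigrams reduces the score to the unigram precision alone.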

- Raises:

ValueError – If tokenize is not one of 'none', '13a', 'zh', 'intl' or 'char'.

ValueError – If tokenize is set to 'intl' and regex is not installed.

ValueError – If weights is not None and its length is not equal to n_gram.

Example

>>> from torchmetrics.text import SacreBLEUScore
>>> preds = ['the cat is on the mat']
>>> target = [['there is a cat on the mat', 'a cat is on the mat']]
>>> sacre_bleu = SacreBLEUScore()
>>> sacre_bleu(preds, target)
tensor(0.7598)

Additional References:

Automatic Evaluation of Machine Translation Quality Using Longest Common Subsequence and Skip-Bigram Statistics by Chin-Yew Lin and Franz Josef Och.

- plot(val=None, ax=None)

Plot a single or multiple values from the metric.

- Parameters:

val (Union[Tensor, Sequence[Tensor], None]) – Either a single result from calling metric.forward or metric.compute, or a list of these results. If no value is provided, metric.compute is called automatically and its result is plotted.

ax (Optional[Axes]) – A matplotlib axis object. If provided, the plot is added to that axis.

- Return type:

- Returns:

Figure and Axes object

- Raises:

ModuleNotFoundError – If matplotlib is not installed

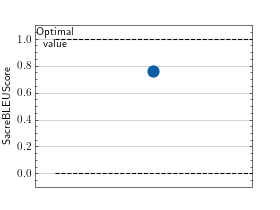

>>> # Example plotting a single value
>>> from torchmetrics.text import SacreBLEUScore
>>> metric = SacreBLEUScore()
>>> preds = ['the cat is on the mat']
>>> target = [['there is a cat on the mat', 'a cat is on the mat']]
>>> metric.update(preds, target)
>>> fig_, ax_ = metric.plot()

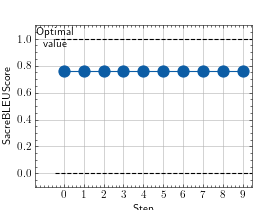

>>> # Example plotting multiple values
>>> from torchmetrics.text import SacreBLEUScore
>>> metric = SacreBLEUScore()
>>> preds = ['the cat is on the mat']
>>> target = [['there is a cat on the mat', 'a cat is on the mat']]
>>> values = []
>>> for _ in range(10):
...     values.append(metric(preds, target))
>>> fig_, ax_ = metric.plot(values)

Functional Interface

- torchmetrics.functional.text.sacre_bleu_score(preds, target, n_gram=4, smooth=False, tokenize='13a', lowercase=False, weights=None)

Calculate BLEU score [1] of machine translated text with one or more references.

This implementation follows the behaviour of the SacreBLEU [2] implementation from https://github.com/mjpost/sacrebleu.

- Parameters:

preds (Sequence[str]) – An iterable of machine-translated corpus

target (Sequence[Sequence[str]]) – An iterable of iterables of reference corpus

tokenize (Literal['none', '13a', 'zh', 'intl', 'char', 'ja-mecab', 'ko-mecab', 'flores101', 'flores200']) – Tokenization technique to be used. Choose between 'none', '13a', 'zh', 'intl', 'char', 'ja-mecab', 'ko-mecab', 'flores101' and 'flores200'.

lowercase (bool) – If True, BLEU score over lowercased text is calculated.

weights (Optional[Sequence[float]]) – Weights used for unigrams, bigrams, etc. to calculate BLEU score. If not provided, uniform weights are used.
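The effect of lowercase can be seen on unigrams alone. The function unigram_precision below is a hypothetical helper that assumes whitespace tokenization and a single reference; it is a rough sketch of clipped unigram matching, not the library's code path.

```python
from collections import Counter


def unigram_precision(pred, ref, lowercase=False):
    # Hypothetical helper: clipped unigram precision against one reference.
    if lowercase:
        pred, ref = pred.lower(), ref.lower()
    pred_counts = Counter(pred.split())
    ref_counts = Counter(ref.split())
    # Counter intersection clips each count to the reference count.
    overlap = sum((pred_counts & ref_counts).values())
    return overlap / sum(pred_counts.values())


pred = 'The cat is on the mat'
ref = 'the cat is on the mat'
print(round(unigram_precision(pred, ref), 4))                  # 0.8333
print(round(unigram_precision(pred, ref, lowercase=True), 4))  # 1.0
```

Without lowercasing, 'The' fails to match 'the', so one of the six predicted tokens goes unmatched.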

- Return type:

Tensor

- Returns:

Tensor with BLEU Score

- Raises:

ValueError – If the preds and target corpora have different lengths.

ValueError – If weights is not None and its length is not equal to n_gram.
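The two conditions above can be expressed as a small pre-flight check. The function validate_inputs is a hypothetical helper written for illustration, and its error messages are assumptions rather than the library's actual messages.

```python
def validate_inputs(preds, target, n_gram=4, weights=None):
    # Hypothetical helper mirroring the documented ValueError conditions.
    if len(preds) != len(target):
        raise ValueError(
            f"preds and target have different lengths: {len(preds)} != {len(target)}"
        )
    if weights is not None and len(weights) != n_gram:
        raise ValueError(
            f"weights has length {len(weights)}, expected n_gram={n_gram}"
        )


preds = ['the cat is on the mat']
target = [['there is a cat on the mat', 'a cat is on the mat']]

validate_inputs(preds, target)  # passes silently

try:
    validate_inputs(preds, target, n_gram=4, weights=[0.5, 0.5])
except ValueError as err:
    print(err)  # weights has length 2, expected n_gram=4
```

Note that target holds one list of references per prediction, so a single prediction with two references still has matching outer lengths.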

Example

>>> from torchmetrics.functional.text import sacre_bleu_score
>>> preds = ['the cat is on the mat']
>>> target = [['there is a cat on the mat', 'a cat is on the mat']]
>>> sacre_bleu_score(preds, target)
tensor(0.7598)

References

[1] BLEU: a Method for Automatic Evaluation of Machine Translation by Kishore Papineni, Salim Roukos, Todd Ward, and Wei-Jing Zhu.

[2] A Call for Clarity in Reporting BLEU Scores by Matt Post.

[3] Automatic Evaluation of Machine Translation Quality Using Longest Common Subsequence and Skip-Bigram Statistics by Chin-Yew Lin and Franz Josef Och.