KL Divergence

Module Interface

- class torchmetrics.KLDivergence(log_prob=False, reduction='mean', **kwargs)

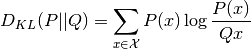

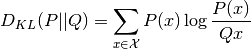

Computes the KL divergence:

Where

and

and  are probability distributions where

are probability distributions where  usually represents a distribution

over data and

usually represents a distribution

over data and  is often a prior or approximation of

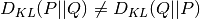

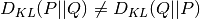

is often a prior or approximation of  . It should be noted that the KL divergence

is a non-symetrical metric i.e.

. It should be noted that the KL divergence

is a non-symetrical metric i.e.  .
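The definition $D_{KL}(P \| Q) = \sum_x P(x) \log \frac{P(x)}{Q(x)}$ and its asymmetry can be checked by hand. The following is a minimal plain-Python sketch, not the library's implementation; the helper name `kl_plain` is hypothetical, and skipping terms with $P(x) = 0$ is an assumed convention.

```python
import math

def kl_plain(p, q):
    """Hypothetical helper: D_KL(P || Q) for two already-normalized distributions."""
    # Assumed convention: terms with P(x) == 0 contribute nothing to the sum.
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

p = [0.36, 0.48, 0.16]
q = [1 / 3, 1 / 3, 1 / 3]

forward = kl_plain(p, q)  # approximately 0.0853, the value shown in the Example on this page
reverse = kl_plain(q, p)  # a different value: the divergence is not symmetric

print(f"D_KL(P || Q) = {forward:.4f}")
print(f"D_KL(Q || P) = {reverse:.4f}")
```

Swapping the arguments changes which distribution weights the log-ratio, which is why the two directions disagree.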

- Parameters

  - p – data distribution with shape [N, d]

  - q – prior or approximate distribution with shape [N, d]

  - log_prob (bool) – indicates whether the input is log-probabilities or probabilities. If given as probabilities, the input is normalized so that the distributions sum to 1.

  - reduction (Literal['mean', 'sum', 'none', None]) – Determines how to reduce over the N/batch dimension:

    - 'mean' [default]: Averages score across samples

    - 'sum': Sum score across samples

    - 'none' or None: Returns score per sample

  - kwargs (Any) – Additional keyword arguments, see Advanced metric settings for more info.
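To make the normalization and `reduction` options concrete, here is a small plain-Python sketch of the behaviour described in the parameter list. It is an illustration under stated assumptions, not the torchmetrics code: the helpers `kl_per_sample` and `reduce_scores` are hypothetical, and inputs are nested lists standing in for `[N, d]` tensors.

```python
import math

def kl_per_sample(p_rows, q_rows):
    """Hypothetical helper: one KL score per row of two [N, d] nested lists."""
    scores = []
    for p_row, q_row in zip(p_rows, q_rows):
        # Probability inputs are rescaled so that every row sums to 1.
        p_norm = [x / sum(p_row) for x in p_row]
        q_norm = [x / sum(q_row) for x in q_row]
        scores.append(
            sum(pi * math.log(pi / qi) for pi, qi in zip(p_norm, q_norm) if pi > 0)
        )
    return scores

def reduce_scores(scores, reduction="mean"):
    """Hypothetical helper mirroring the documented reduction options."""
    if reduction == "mean":
        return sum(scores) / len(scores)
    if reduction == "sum":
        return sum(scores)
    if reduction is None or reduction == "none":
        return scores
    raise ValueError("reduction must be 'mean', 'sum', 'none' or None")

# The second row of each input is deliberately unnormalized.
p = [[0.36, 0.48, 0.16], [2.0, 1.0, 1.0]]
q = [[1 / 3, 1 / 3, 1 / 3], [1.0, 1.0, 2.0]]

scores = kl_per_sample(p, q)
per_sample = reduce_scores(scores, "none")  # list with one score per sample
total = reduce_scores(scores, "sum")        # scalar: sum over the batch
average = reduce_scores(scores, "mean")     # scalar: mean over the batch
print(per_sample, total, average)
```

With `'none'` the batch dimension is preserved, so the result has one entry per sample rather than a single scalar.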

- Raises

  - TypeError – If log_prob is not a bool.

  - ValueError – If reduction is not one of 'mean', 'sum', 'none' or None.

Note

Half precision is only supported on GPU for this metric.

Example

    >>> import torch
    >>> from torchmetrics.functional import kl_divergence
    >>> p = torch.tensor([[0.36, 0.48, 0.16]])
    >>> q = torch.tensor([[1/3, 1/3, 1/3]])
    >>> kl_divergence(p, q)
    tensor(0.0853)

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- compute()

Override this method to compute the final metric value from state variables synchronized across the distributed backend.

- Return type

Functional Interface

- torchmetrics.functional.kl_divergence(p, q, log_prob=False, reduction='mean')

Computes the KL divergence:

$$D_{KL}(P \| Q) = \sum_{x \in \mathcal{X}} P(x) \log \frac{P(x)}{Q(x)}$$

where $P$ and $Q$ are probability distributions; $P$ usually represents a distribution over data and $Q$ is often a prior or approximation of $P$. Note that the KL divergence is a non-symmetrical metric, i.e. $D_{KL}(P \| Q) \neq D_{KL}(Q \| P)$.

- Parameters

  - p (Tensor) – data distribution with shape [N, d]

  - q (Tensor) – prior or approximate distribution with shape [N, d]

  - log_prob (bool) – indicates whether the input is log-probabilities or probabilities. If given as probabilities, the input is normalized so that the distributions sum to 1.

  - reduction (Literal['mean', 'sum', 'none', None]) – Determines how to reduce over the N/batch dimension:

    - 'mean' [default]: Averages score across samples

    - 'sum': Sum score across samples

    - 'none' or None: Returns score per sample
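When the inputs are log-probabilities, the same quantity can be written as $\sum_x \exp(\log P(x)) \, (\log P(x) - \log Q(x))$. The plain-Python sketch below checks that this agrees with the probability form. It is only an illustration: `kl_from_log_probs` is a hypothetical helper, and it assumes the log-probabilities are already normalized, since the parameter description mentions normalization only for probability inputs.

```python
import math

def kl_from_log_probs(log_p, log_q):
    """Hypothetical helper: KL divergence from normalized log-probabilities."""
    # exp(log P(x)) recovers P(x); the log-ratio becomes a plain difference.
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))

p = [0.36, 0.48, 0.16]
q = [1 / 3, 1 / 3, 1 / 3]

log_p = [math.log(x) for x in p]
log_q = [math.log(x) for x in q]

kl_log = kl_from_log_probs(log_p, log_q)
kl_direct = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

# Both forms should agree up to floating-point error.
print(f"{kl_log:.4f} {kl_direct:.4f}")
```

Working in log space avoids forming the ratio P(x)/Q(x) directly, which can help when some probabilities are very small.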

Example

    >>> import torch
    >>> from torchmetrics.functional import kl_divergence
    >>> p = torch.tensor([[0.36, 0.48, 0.16]])
    >>> q = torch.tensor([[1/3, 1/3, 1/3]])
    >>> kl_divergence(p, q)
    tensor(0.0853)

- Return type