Hamming Distance

Module Interface

HammingDistance

- class torchmetrics.HammingDistance(threshold=0.5, **kwargs)

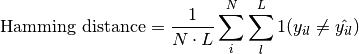

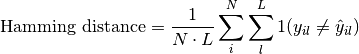

Hamming distance.

Note

From v0.10, `binary_*`, `multiclass_*` and `multilabel_*` versions exist for each classification metric. Moving forward we recommend using these versions. This base metric will still work as it did prior to v0.10 until v0.11. From v0.11 the `task` argument introduced in this metric will be required and the general order of arguments may change, such that this metric will just function as a single entry point for calling the three specialized versions.

Computes the average Hamming distance (also known as Hamming loss) between targets and predictions:

$$\text{Hamming distance} = \frac{1}{N \cdot L} \sum_{i=1}^{N} \sum_{l=1}^{L} 1(y_{il} \neq \hat{y}_{il})$$

Where $y$ is a tensor of target values, $\hat{y}$ is a tensor of predictions, and $\bullet_{il}$ refers to the $l$-th label of the $i$-th sample of that tensor.

This is the same as `1 - accuracy` for binary data, while for all other types of inputs it treats each possible label separately, meaning that, for example, multi-class data is treated as if it were multi-label.

Accepts all input types listed in Input types.
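As a minimal sketch of the definition above (not the library's implementation), the average can be computed directly in plain PyTorch. The helper name `hamming_distance_manual` is hypothetical and assumes `preds` and `target` are integer tensors of the same shape. The last part assumes that "treated as if it were multi-label" means comparing one-hot encodings.

```python
import torch
import torch.nn.functional as F


def hamming_distance_manual(preds: torch.Tensor, target: torch.Tensor) -> torch.Tensor:
    """Fraction of positions where preds and target disagree (hypothetical helper)."""
    return (preds != target).float().mean()


# Multi-label style input: 1 mismatch out of 4 entries.
target = torch.tensor([[0, 1], [1, 1]])
preds = torch.tensor([[0, 1], [0, 1]])
multilabel_result = hamming_distance_manual(preds, target)

# Binary input: the distance equals 1 - accuracy.
bin_target = torch.tensor([0, 1, 0, 1, 0, 1])
bin_preds = torch.tensor([0, 0, 1, 1, 0, 1])
accuracy = (bin_preds == bin_target).float().mean()
binary_result = hamming_distance_manual(bin_preds, bin_target)

# Multi-class input viewed as multi-label: compare one-hot encodings,
# so a single wrong class costs two label mismatches.
mc_target = F.one_hot(torch.tensor([2, 1, 0, 0]), num_classes=3)
mc_preds = F.one_hot(torch.tensor([2, 1, 0, 1]), num_classes=3)
multiclass_result = hamming_distance_manual(mc_preds, mc_target)

print(multilabel_result, binary_result, 1 - accuracy, multiclass_result)
```

The first value is 1/4, the binary value is 2/6 (identical to `1 - accuracy`), and the one-hot multi-class value is 2/12.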

- Parameters

`threshold` (`float`) – Threshold for transforming probability or logit predictions to binary (0,1) predictions, in the case of binary or multi-label inputs. The default value of `0.5` corresponds to the input being probabilities.

`kwargs` (`Any`) – Additional keyword arguments, see Advanced metric settings for more info.

- Raises

ValueError – If `threshold` is not between `0` and `1`.

Example

    >>> import torch
    >>> from torchmetrics import HammingDistance
    >>> target = torch.tensor([[0, 1], [1, 1]])
    >>> preds = torch.tensor([[0, 1], [0, 1]])
    >>> hamming_distance = HammingDistance()
    >>> hamming_distance(preds, target)
    tensor(0.2500)

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- compute()

Computes the Hamming distance based on the inputs previously passed to `update`.

- Return type

Tensor
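The update/compute pattern can be sketched with a hand-rolled accumulator. This is an illustration under stated assumptions, not the library's code: the class `RunningHammingDistance` and its attributes are hypothetical, and inputs are assumed to be integer tensors of matching shape.

```python
import torch


class RunningHammingDistance:
    """Hand-rolled accumulator (hypothetical, for illustration only)."""

    def __init__(self) -> None:
        self.mismatches = 0
        self.total = 0

    def update(self, preds: torch.Tensor, target: torch.Tensor) -> None:
        # Accumulate raw counts so batches of different sizes are weighted correctly.
        self.mismatches += int((preds != target).sum())
        self.total += target.numel()

    def compute(self) -> torch.Tensor:
        # Final value over everything seen so far.
        return torch.tensor(self.mismatches / self.total)


metric = RunningHammingDistance()
metric.update(torch.tensor([[0, 1], [0, 1]]), torch.tensor([[0, 1], [1, 1]]))  # 1 of 4 wrong
metric.update(torch.tensor([[1, 1]]), torch.tensor([[0, 0]]))  # 2 of 2 wrong
result = metric.compute()  # 3 mismatches over 6 entries
```

Storing counts rather than per-batch averages keeps the final value independent of how the data was split into batches.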

BinaryHammingDistance

- class torchmetrics.classification.BinaryHammingDistance(threshold=0.5, multidim_average='global', ignore_index=None, validate_args=True, **kwargs)

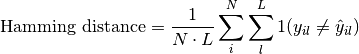

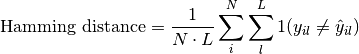

Computes the average Hamming distance (also known as Hamming loss) for binary tasks:

$$\text{Hamming distance} = \frac{1}{N \cdot L} \sum_{i=1}^{N} \sum_{l=1}^{L} 1(y_{il} \neq \hat{y}_{il})$$

Where $y$ is a tensor of target values, $\hat{y}$ is a tensor of predictions, and $\bullet_{il}$ refers to the $l$-th label of the $i$-th sample of that tensor.

Accepts the following input tensors:

- `preds` (int or float tensor): `(N, ...)`. If preds is a floating point tensor with values outside the [0,1] range, we consider the input to be logits and automatically apply sigmoid per element. Additionally, we convert to an int tensor by thresholding with the value in `threshold`.
- `target` (int tensor): `(N, ...)`

The influence of the additional dimension `...` (if present) is determined by the `multidim_average` argument.

- Parameters

`threshold` (`float`) – Threshold for transforming probability to binary {0,1} predictions

`multidim_average` (`Literal['global', 'samplewise']`) – Defines how additional dimensions `...` should be handled. Should be one of the following:

  - `global`: Additional dimensions are flattened along the batch dimension
  - `samplewise`: Statistic will be calculated independently for each sample on the `N` axis. The statistics in this case are calculated over the additional dimensions.

`ignore_index` (`Optional[int]`) – Specifies a target value that is ignored and does not contribute to the metric calculation

`validate_args` (`bool`) – bool indicating if input arguments and tensors should be validated for correctness. Set to `False` for faster computations.
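The preprocessing described for `preds` (sigmoid for logits, then thresholding) can be sketched as follows. The helper `binarize` is hypothetical, and the strict `>` comparison against the threshold is an assumption rather than something the text above states.

```python
import torch


def binarize(preds: torch.Tensor, threshold: float = 0.5) -> torch.Tensor:
    """Hypothetical helper mirroring the described preprocessing of `preds`."""
    if preds.is_floating_point():
        # Values outside [0, 1] are taken as logits and squashed with sigmoid.
        if ((preds < 0) | (preds > 1)).any():
            preds = preds.sigmoid()
        # Assumption: a strict comparison against the threshold.
        preds = (preds > threshold).long()
    return preds


target = torch.tensor([0, 1, 0, 1, 0, 1])
probs = torch.tensor([0.11, 0.22, 0.84, 0.73, 0.33, 0.92])
logits = torch.tensor([-2.1, -1.3, 1.7, 1.0, -0.7, 2.4])

from_probs = binarize(probs)
from_logits = binarize(logits)
distance = (from_probs != target).float().mean()
```

Both inputs binarize to `[0, 0, 1, 1, 0, 1]`, which disagrees with `target` in two of six positions.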

- Returns

If `multidim_average` is set to `global`, the metric returns a scalar value. If `multidim_average` is set to `samplewise`, the metric returns an `(N,)` vector consisting of a scalar value per sample.
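The difference between the two return shapes can be illustrated in plain PyTorch. This is a sketch that assumes probabilities are thresholded at 0.5 with a strict comparison; it does not call the library.

```python
import torch

target = torch.tensor([[[0, 1], [1, 0], [0, 1]], [[1, 1], [0, 0], [1, 0]]])
preds = torch.tensor(
    [
        [[0.59, 0.91], [0.91, 0.99], [0.63, 0.04]],
        [[0.38, 0.04], [0.86, 0.780], [0.45, 0.37]],
    ]
)

# Threshold probabilities at 0.5, then mark every disagreeing position.
mismatch = ((preds > 0.5).long() != target).float()

# 'global': all dimensions are pooled into one scalar.
global_value = mismatch.mean()

# 'samplewise': keep the N axis, average over the remaining dimensions.
samplewise_value = mismatch.flatten(start_dim=1).mean(dim=1)

print(global_value.shape, samplewise_value.shape)
```

Here the pooled value is 9/12, while the per-sample values are 4/6 and 5/6.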

- Example (preds is int tensor):

    >>> import torch
    >>> from torchmetrics.classification import BinaryHammingDistance
    >>> target = torch.tensor([0, 1, 0, 1, 0, 1])
    >>> preds = torch.tensor([0, 0, 1, 1, 0, 1])
    >>> metric = BinaryHammingDistance()
    >>> metric(preds, target)
    tensor(0.3333)

- Example (preds is float tensor):

    >>> import torch
    >>> from torchmetrics.classification import BinaryHammingDistance
    >>> target = torch.tensor([0, 1, 0, 1, 0, 1])
    >>> preds = torch.tensor([0.11, 0.22, 0.84, 0.73, 0.33, 0.92])
    >>> metric = BinaryHammingDistance()
    >>> metric(preds, target)
    tensor(0.3333)

- Example (multidim tensors):

    >>> import torch
    >>> from torchmetrics.classification import BinaryHammingDistance
    >>> target = torch.tensor([[[0, 1], [1, 0], [0, 1]], [[1, 1], [0, 0], [1, 0]]])
    >>> preds = torch.tensor(
    ...     [
    ...         [[0.59, 0.91], [0.91, 0.99], [0.63, 0.04]],
    ...         [[0.38, 0.04], [0.86, 0.780], [0.45, 0.37]],
    ...     ]
    ... )
    >>> metric = BinaryHammingDistance(multidim_average='samplewise')
    >>> metric(preds, target)
    tensor([0.6667, 0.8333])

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- compute()

Computes the final statistics.

- Return type

Tensor

- Returns

The metric returns a tensor of shape `(..., 5)`, where the last dimension corresponds to `[tp, fp, tn, fn, sup]` (`sup` stands for support and equals `tp + fn`). The shape depends on the `multidim_average` parameter:

  - If `multidim_average` is set to `global`, the shape will be `(5,)`
  - If `multidim_average` is set to `samplewise`, the shape will be `(N, 5)`

MulticlassHammingDistance

- class torchmetrics.classification.MulticlassHammingDistance(num_classes, top_k=1, average='macro', multidim_average='global', ignore_index=None, validate_args=True, **kwargs)

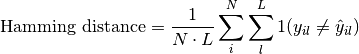

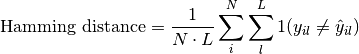

Computes the average Hamming distance (also known as Hamming loss) for multiclass tasks:

$$\text{Hamming distance} = \frac{1}{N \cdot L} \sum_{i=1}^{N} \sum_{l=1}^{L} 1(y_{il} \neq \hat{y}_{il})$$

Where $y$ is a tensor of target values, $\hat{y}$ is a tensor of predictions, and $\bullet_{il}$ refers to the $l$-th label of the $i$-th sample of that tensor.

Accepts the following input tensors:

- `preds`: `(N, ...)` (int tensor) or `(N, C, ...)` (float tensor). If preds is a floating point tensor, we apply `torch.argmax` along the `C` dimension to automatically convert probabilities/logits into an int tensor.
- `target` (int tensor): `(N, ...)`

The influence of the additional dimension `...` (if present) is determined by the `multidim_average` argument.

- Parameters

`num_classes` (`int`) – Integer specifying the number of classes

`average` (`Optional[Literal['micro', 'macro', 'weighted', 'none']]`) – Defines the reduction that is applied over labels. Should be one of the following:

  - `micro`: Sum statistics over all labels
  - `macro`: Calculate statistics for each label and average them
  - `weighted`: Calculates statistics for each label and computes weighted average using their support
  - `"none"` or `None`: Calculates statistic for each label and applies no reduction

`top_k` (`int`) – Number of highest probability or logit score predictions considered to find the correct label. Only works when `preds` contain probabilities/logits.

`multidim_average` (`Literal['global', 'samplewise']`) – Defines how additional dimensions `...` should be handled. Should be one of the following:

  - `global`: Additional dimensions are flattened along the batch dimension
  - `samplewise`: Statistic will be calculated independently for each sample on the `N` axis. The statistics in this case are calculated over the additional dimensions.

`ignore_index` (`Optional[int]`) – Specifies a target value that is ignored and does not contribute to the metric calculation

`validate_args` (`bool`) – bool indicating if input arguments and tensors should be validated for correctness. Set to `False` for faster computations.
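The `argmax` conversion and the `average` options can be sketched in plain PyTorch. The function `multiclass_hamming_sketch` is hypothetical and is not the library's code. It assumes the per-class statistic is the share of each class's samples that were mispredicted, a reading chosen because it reproduces the `macro` and `None` values shown in this section's examples. The `micro` and `weighted` branches follow the parameter descriptions above and are untested against the library.

```python
import torch
import torch.nn.functional as F


def multiclass_hamming_sketch(preds, target, num_classes, average="macro"):
    """Hypothetical per-class reduction for 1-D targets; not the library's code."""
    if preds.is_floating_point():
        # (N, C) scores -> (N,) predicted class indices.
        preds = preds.argmax(dim=1)
    wrong = (preds != target).float()                  # (N,)
    onehot = F.one_hot(target, num_classes).float()    # (N, C)
    support = onehot.sum(dim=0)                        # samples per true class
    errors = (onehot * wrong.unsqueeze(1)).sum(dim=0)  # mispredicted per true class
    if average == "micro":
        return errors.sum() / support.sum()
    per_class = errors / support.clamp(min=1)
    if average == "macro":
        return per_class.mean()
    if average == "weighted":
        return (per_class * support).sum() / support.sum()
    return per_class  # average is None or "none"


target = torch.tensor([2, 1, 0, 0])
scores = torch.tensor([
    [0.16, 0.26, 0.58],
    [0.22, 0.61, 0.17],
    [0.71, 0.09, 0.20],
    [0.05, 0.82, 0.13],
])

macro_out = multiclass_hamming_sketch(scores, target, num_classes=3)
per_class_out = multiclass_hamming_sketch(scores, target, num_classes=3, average=None)
micro_out = multiclass_hamming_sketch(scores, target, num_classes=3, average="micro")
weighted_out = multiclass_hamming_sketch(scores, target, num_classes=3, average="weighted")
```

Only class 0 has an error (one of its two samples), so the per-class vector is `[0.5, 0, 0]` and its plain mean is 1/6. The `micro` and `weighted` branches both give 1/4 on this input.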

- Returns

The returned shape depends on the `average` and `multidim_average` arguments:

  - If `multidim_average` is set to `global`:
    - If `average='micro'/'macro'/'weighted'`, the output will be a scalar tensor
    - If `average=None/'none'`, the shape will be `(C,)`
  - If `multidim_average` is set to `samplewise`:
    - If `average='micro'/'macro'/'weighted'`, the shape will be `(N,)`
    - If `average=None/'none'`, the shape will be `(N, C)`

- Example (preds is int tensor):

    >>> import torch
    >>> from torchmetrics.classification import MulticlassHammingDistance
    >>> target = torch.tensor([2, 1, 0, 0])
    >>> preds = torch.tensor([2, 1, 0, 1])
    >>> metric = MulticlassHammingDistance(num_classes=3)
    >>> metric(preds, target)
    tensor(0.1667)
    >>> metric = MulticlassHammingDistance(num_classes=3, average=None)
    >>> metric(preds, target)
    tensor([0.5000, 0.0000, 0.0000])

- Example (preds is float tensor):

    >>> import torch
    >>> from torchmetrics.classification import MulticlassHammingDistance
    >>> target = torch.tensor([2, 1, 0, 0])
    >>> preds = torch.tensor([
    ...     [0.16, 0.26, 0.58],
    ...     [0.22, 0.61, 0.17],
    ...     [0.71, 0.09, 0.20],
    ...     [0.05, 0.82, 0.13],
    ... ])
    >>> metric = MulticlassHammingDistance(num_classes=3)
    >>> metric(preds, target)
    tensor(0.1667)
    >>> metric = MulticlassHammingDistance(num_classes=3, average=None)
    >>> metric(preds, target)
    tensor([0.5000, 0.0000, 0.0000])

- Example (multidim tensors):

    >>> import torch
    >>> from torchmetrics.classification import MulticlassHammingDistance
    >>> target = torch.tensor([[[0, 1], [2, 1], [0, 2]], [[1, 1], [2, 0], [1, 2]]])
    >>> preds = torch.tensor([[[0, 2], [2, 0], [0, 1]], [[2, 2], [2, 1], [1, 0]]])
    >>> metric = MulticlassHammingDistance(num_classes=3, multidim_average='samplewise')
    >>> metric(preds, target)
    tensor([0.5000, 0.7222])
    >>> metric = MulticlassHammingDistance(num_classes=3, multidim_average='samplewise', average=None)
    >>> metric(preds, target)
    tensor([[0.0000, 1.0000, 0.5000],
            [1.0000, 0.6667, 0.5000]])

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- compute()

Computes the final statistics.

- Return type

Tensor

- Returns

The metric returns a tensor of shape `(..., 5)`, where the last dimension corresponds to `[tp, fp, tn, fn, sup]` (`sup` stands for support and equals `tp + fn`). The shape depends on the `average` and `multidim_average` parameters:

  - If `multidim_average` is set to `global`:
    - If `average='micro'/'macro'/'weighted'`, the shape will be `(5,)`
    - If `average=None/'none'`, the shape will be `(C, 5)`
  - If `multidim_average` is set to `samplewise`:
    - If `average='micro'/'macro'/'weighted'`, the shape will be `(N, 5)`
    - If `average=None/'none'`, the shape will be `(N, C, 5)`

MultilabelHammingDistance

- class torchmetrics.classification.MultilabelHammingDistance(num_labels, threshold=0.5, average='macro', multidim_average='global', ignore_index=None, validate_args=True, **kwargs)

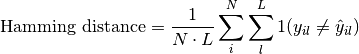

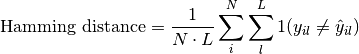

Computes the average Hamming distance (also known as Hamming loss) for multilabel tasks:

$$\text{Hamming distance} = \frac{1}{N \cdot L} \sum_{i=1}^{N} \sum_{l=1}^{L} 1(y_{il} \neq \hat{y}_{il})$$

Where $y$ is a tensor of target values, $\hat{y}$ is a tensor of predictions, and $\bullet_{il}$ refers to the $l$-th label of the $i$-th sample of that tensor.

Accepts the following input tensors:

- `preds` (int or float tensor): `(N, C, ...)`. If preds is a floating point tensor with values outside the [0,1] range, we consider the input to be logits and automatically apply sigmoid per element. Additionally, we convert to an int tensor by thresholding with the value in `threshold`.
- `target` (int tensor): `(N, C, ...)`

The influence of the additional dimension `...` (if present) is determined by the `multidim_average` argument.

- Parameters

`threshold` (`float`) – Threshold for transforming probability to binary (0,1) predictions

`average` (`Optional[Literal['micro', 'macro', 'weighted', 'none']]`) – Defines the reduction that is applied over labels. Should be one of the following:

  - `micro`: Sum statistics over all labels
  - `macro`: Calculate statistics for each label and average them
  - `weighted`: Calculates statistics for each label and computes weighted average using their support
  - `"none"` or `None`: Calculates statistic for each label and applies no reduction

`multidim_average` (`Literal['global', 'samplewise']`) – Defines how additional dimensions `...` should be handled. Should be one of the following:

  - `global`: Additional dimensions are flattened along the batch dimension
  - `samplewise`: Statistic will be calculated independently for each sample on the `N` axis. The statistics in this case are calculated over the additional dimensions.

`ignore_index` (`Optional[int]`) – Specifies a target value that is ignored and does not contribute to the metric calculation

`validate_args` (`bool`) – bool indicating if input arguments and tensors should be validated for correctness. Set to `False` for faster computations.
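For multilabel input, the label-wise reductions can be sketched directly. This is an illustration in plain PyTorch under the assumption that probabilities are thresholded at 0.5 with a strict comparison; it does not call the library.

```python
import torch

target = torch.tensor([[0, 1, 0], [1, 0, 1]])
probs = torch.tensor([[0.11, 0.22, 0.84], [0.73, 0.33, 0.92]])

preds = (probs > 0.5).long()          # threshold each label independently
mismatch = (preds != target).float()  # (N, C)

per_label = mismatch.mean(dim=0)      # average=None / 'none' -> shape (C,)
macro = per_label.mean()              # average='macro' -> scalar
micro = mismatch.mean()               # average='micro' -> scalar
```

The per-label vector is `[0, 0.5, 0.5]`, and both scalar reductions give 1/3 here because every label has the same number of entries.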

- Returns

The returned shape depends on the `average` and `multidim_average` arguments:

  - If `multidim_average` is set to `global`:
    - If `average='micro'/'macro'/'weighted'`, the output will be a scalar tensor
    - If `average=None/'none'`, the shape will be `(C,)`
  - If `multidim_average` is set to `samplewise`:
    - If `average='micro'/'macro'/'weighted'`, the shape will be `(N,)`
    - If `average=None/'none'`, the shape will be `(N, C)`

- Example (preds is int tensor):

    >>> import torch
    >>> from torchmetrics.classification import MultilabelHammingDistance
    >>> target = torch.tensor([[0, 1, 0], [1, 0, 1]])
    >>> preds = torch.tensor([[0, 0, 1], [1, 0, 1]])
    >>> metric = MultilabelHammingDistance(num_labels=3)
    >>> metric(preds, target)
    tensor(0.3333)
    >>> metric = MultilabelHammingDistance(num_labels=3, average=None)
    >>> metric(preds, target)
    tensor([0.0000, 0.5000, 0.5000])

- Example (preds is float tensor):

    >>> import torch
    >>> from torchmetrics.classification import MultilabelHammingDistance
    >>> target = torch.tensor([[0, 1, 0], [1, 0, 1]])
    >>> preds = torch.tensor([[0.11, 0.22, 0.84], [0.73, 0.33, 0.92]])
    >>> metric = MultilabelHammingDistance(num_labels=3)
    >>> metric(preds, target)
    tensor(0.3333)
    >>> metric = MultilabelHammingDistance(num_labels=3, average=None)
    >>> metric(preds, target)
    tensor([0.0000, 0.5000, 0.5000])

- Example (multidim tensors):

    >>> import torch
    >>> from torchmetrics.classification import MultilabelHammingDistance
    >>> target = torch.tensor([[[0, 1], [1, 0], [0, 1]], [[1, 1], [0, 0], [1, 0]]])
    >>> preds = torch.tensor(
    ...     [
    ...         [[0.59, 0.91], [0.91, 0.99], [0.63, 0.04]],
    ...         [[0.38, 0.04], [0.86, 0.780], [0.45, 0.37]],
    ...     ]
    ... )
    >>> metric = MultilabelHammingDistance(num_labels=3, multidim_average='samplewise')
    >>> metric(preds, target)
    tensor([0.6667, 0.8333])
    >>> metric = MultilabelHammingDistance(num_labels=3, multidim_average='samplewise', average=None)
    >>> metric(preds, target)
    tensor([[0.5000, 0.5000, 1.0000],
            [1.0000, 1.0000, 0.5000]])

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- compute()

Computes the final statistics.

- Return type

Tensor

- Returns

The metric returns a tensor of shape `(..., 5)`, where the last dimension corresponds to `[tp, fp, tn, fn, sup]` (`sup` stands for support and equals `tp + fn`). The shape depends on the `average` and `multidim_average` parameters:

  - If `multidim_average` is set to `global`:
    - If `average='micro'/'macro'/'weighted'`, the shape will be `(5,)`
    - If `average=None/'none'`, the shape will be `(C, 5)`
  - If `multidim_average` is set to `samplewise`:
    - If `average='micro'/'macro'/'weighted'`, the shape will be `(N, 5)`
    - If `average=None/'none'`, the shape will be `(N, C, 5)`

Functional Interface

hamming_distance

- torchmetrics.functional.hamming_distance(preds, target, threshold=0.5, task=None, num_classes=None, num_labels=None, average='macro', top_k=1, multidim_average='global', ignore_index=None, validate_args=True)

Hamming distance.

Note

From v0.10, `binary_*`, `multiclass_*` and `multilabel_*` versions exist for each classification metric. Moving forward we recommend using these versions. This base metric will still work as it did prior to v0.10 until v0.11. From v0.11 the `task` argument introduced in this metric will be required and the general order of arguments may change, such that this metric will just function as a single entry point for calling the three specialized versions.

Computes the average Hamming distance (also known as Hamming loss) between targets and predictions:

$$\text{Hamming distance} = \frac{1}{N \cdot L} \sum_{i=1}^{N} \sum_{l=1}^{L} 1(y_{il} \neq \hat{y}_{il})$$

Where $y$ is a tensor of target values, $\hat{y}$ is a tensor of predictions, and $\bullet_{il}$ refers to the $l$-th label of the $i$-th sample of that tensor.

This is the same as `1 - accuracy` for binary data, while for all other types of inputs it treats each possible label separately, meaning that, for example, multi-class data is treated as if it were multi-label.

Accepts all input types listed in Input types.

- Parameters

Example

    >>> import torch
    >>> from torchmetrics.functional import hamming_distance
    >>> target = torch.tensor([[0, 1], [1, 1]])
    >>> preds = torch.tensor([[0, 1], [0, 1]])
    >>> hamming_distance(preds, target)
    tensor(0.2500)

- Return type

Tensor

binary_hamming_distance

- torchmetrics.functional.classification.binary_hamming_distance(preds, target, threshold=0.5, multidim_average='global', ignore_index=None, validate_args=True)

Computes the average Hamming distance (also known as Hamming loss) for binary tasks:

$$\text{Hamming distance} = \frac{1}{N \cdot L} \sum_{i=1}^{N} \sum_{l=1}^{L} 1(y_{il} \neq \hat{y}_{il})$$

Where $y$ is a tensor of target values, $\hat{y}$ is a tensor of predictions, and $\bullet_{il}$ refers to the $l$-th label of the $i$-th sample of that tensor.

Accepts the following input tensors:

- `preds` (int or float tensor): `(N, ...)`. If preds is a floating point tensor with values outside the [0,1] range, we consider the input to be logits and automatically apply sigmoid per element. Additionally, we convert to an int tensor by thresholding with the value in `threshold`.
- `target` (int tensor): `(N, ...)`

The influence of the additional dimension `...` (if present) is determined by the `multidim_average` argument.

- Parameters

`threshold` (`float`) – Threshold for transforming probability to binary {0,1} predictions

`multidim_average` (`Literal['global', 'samplewise']`) – Defines how additional dimensions `...` should be handled. Should be one of the following:

  - `global`: Additional dimensions are flattened along the batch dimension
  - `samplewise`: Statistic will be calculated independently for each sample on the `N` axis. The statistics in this case are calculated over the additional dimensions.

`ignore_index` (`Optional[int]`) – Specifies a target value that is ignored and does not contribute to the metric calculation

`validate_args` (`bool`) – bool indicating if input arguments and tensors should be validated for correctness. Set to `False` for faster computations.
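The effect of `ignore_index` can be sketched by masking out the ignored target positions before comparing. The helper `binary_hamming_with_ignore` is hypothetical, and masking is an assumption about how "does not contribute" is realised, not a description of the library's internals.

```python
import torch


def binary_hamming_with_ignore(preds, target, ignore_index=None):
    """Hypothetical helper: drop ignored positions before comparing."""
    if ignore_index is not None:
        keep = target != ignore_index
        preds, target = preds[keep], target[keep]
    return (preds != target).float().mean()


target = torch.tensor([0, 1, -1, 1])
preds = torch.tensor([0, 0, 1, 1])

with_ignore = binary_hamming_with_ignore(preds, target, ignore_index=-1)  # 1 of 3 wrong
without_ignore = binary_hamming_with_ignore(preds, target)  # 2 of 4 counted as wrong
```

With the third position masked out, only the remaining three entries enter the average.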

- Return type

Tensor

- Returns

If `multidim_average` is set to `global`, the metric returns a scalar value. If `multidim_average` is set to `samplewise`, the metric returns an `(N,)` vector consisting of a scalar value per sample.

- Example (preds is int tensor):

    >>> import torch
    >>> from torchmetrics.functional.classification import binary_hamming_distance
    >>> target = torch.tensor([0, 1, 0, 1, 0, 1])
    >>> preds = torch.tensor([0, 0, 1, 1, 0, 1])
    >>> binary_hamming_distance(preds, target)
    tensor(0.3333)

- Example (preds is float tensor):

    >>> import torch
    >>> from torchmetrics.functional.classification import binary_hamming_distance
    >>> target = torch.tensor([0, 1, 0, 1, 0, 1])
    >>> preds = torch.tensor([0.11, 0.22, 0.84, 0.73, 0.33, 0.92])
    >>> binary_hamming_distance(preds, target)
    tensor(0.3333)

- Example (multidim tensors):

    >>> import torch
    >>> from torchmetrics.functional.classification import binary_hamming_distance
    >>> target = torch.tensor([[[0, 1], [1, 0], [0, 1]], [[1, 1], [0, 0], [1, 0]]])
    >>> preds = torch.tensor(
    ...     [
    ...         [[0.59, 0.91], [0.91, 0.99], [0.63, 0.04]],
    ...         [[0.38, 0.04], [0.86, 0.780], [0.45, 0.37]],
    ...     ]
    ... )
    >>> binary_hamming_distance(preds, target, multidim_average='samplewise')
    tensor([0.6667, 0.8333])

multiclass_hamming_distance

- torchmetrics.functional.classification.multiclass_hamming_distance(preds, target, num_classes, average='macro', top_k=1, multidim_average='global', ignore_index=None, validate_args=True)

Computes the average Hamming distance (also known as Hamming loss) for multiclass tasks:

$$\text{Hamming distance} = \frac{1}{N \cdot L} \sum_{i=1}^{N} \sum_{l=1}^{L} 1(y_{il} \neq \hat{y}_{il})$$

Where $y$ is a tensor of target values, $\hat{y}$ is a tensor of predictions, and $\bullet_{il}$ refers to the $l$-th label of the $i$-th sample of that tensor.

Accepts the following input tensors:

- `preds`: `(N, ...)` (int tensor) or `(N, C, ...)` (float tensor). If preds is a floating point tensor, we apply `torch.argmax` along the `C` dimension to automatically convert probabilities/logits into an int tensor.
- `target` (int tensor): `(N, ...)`

The influence of the additional dimension `...` (if present) is determined by the `multidim_average` argument.

- Parameters

`num_classes` (`int`) – Integer specifying the number of classes

`average` (`Optional[Literal['micro', 'macro', 'weighted', 'none']]`) – Defines the reduction that is applied over labels. Should be one of the following:

  - `micro`: Sum statistics over all labels
  - `macro`: Calculate statistics for each label and average them
  - `weighted`: Calculates statistics for each label and computes weighted average using their support
  - `"none"` or `None`: Calculates statistic for each label and applies no reduction

`top_k` (`int`) – Number of highest probability or logit score predictions considered to find the correct label. Only works when `preds` contain probabilities/logits.

`multidim_average` (`Literal['global', 'samplewise']`) – Defines how additional dimensions `...` should be handled. Should be one of the following:

  - `global`: Additional dimensions are flattened along the batch dimension
  - `samplewise`: Statistic will be calculated independently for each sample on the `N` axis. The statistics in this case are calculated over the additional dimensions.

`ignore_index` (`Optional[int]`) – Specifies a target value that is ignored and does not contribute to the metric calculation

`validate_args` (`bool`) – bool indicating if input arguments and tensors should be validated for correctness. Set to `False` for faster computations.

- Returns

The returned shape depends on the `average` and `multidim_average` arguments:

  - If `multidim_average` is set to `global`:
    - If `average='micro'/'macro'/'weighted'`, the output will be a scalar tensor
    - If `average=None/'none'`, the shape will be `(C,)`
  - If `multidim_average` is set to `samplewise`:
    - If `average='micro'/'macro'/'weighted'`, the shape will be `(N,)`
    - If `average=None/'none'`, the shape will be `(N, C)`
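The `(N,)` and `(N, C)` shapes for `samplewise` can be illustrated in plain PyTorch. This sketch does not call the library. It assumes the per-class statistic is the share of each class's positions that were mispredicted within a sample, a reading that reproduces the multidimensional example values for this function.

```python
import torch
import torch.nn.functional as F

num_classes = 3
target = torch.tensor([[[0, 1], [2, 1], [0, 2]], [[1, 1], [2, 0], [1, 2]]])
preds = torch.tensor([[[0, 2], [2, 0], [0, 1]], [[2, 2], [2, 1], [1, 0]]])

# Flatten the extra dimensions but keep the sample axis: (N, M).
target_flat = target.flatten(start_dim=1)
wrong = (preds.flatten(start_dim=1) != target_flat).float()

onehot = F.one_hot(target_flat, num_classes).float()  # (N, M, C)
support = onehot.sum(dim=1)                           # (N, C) positions per true class
errors = (onehot * wrong.unsqueeze(-1)).sum(dim=1)    # (N, C) mispredicted positions

per_sample_per_class = errors / support.clamp(min=1)  # average=None -> (N, C)
per_sample_macro = per_sample_per_class.mean(dim=1)   # average='macro' -> (N,)

print(per_sample_per_class.shape, per_sample_macro.shape)
```

The per-class rows are `[0, 1, 0.5]` and `[1, 2/3, 0.5]`, whose means are 0.5 and roughly 0.7222.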

- Example (preds is int tensor):

    >>> import torch
    >>> from torchmetrics.functional.classification import multiclass_hamming_distance
    >>> target = torch.tensor([2, 1, 0, 0])
    >>> preds = torch.tensor([2, 1, 0, 1])
    >>> multiclass_hamming_distance(preds, target, num_classes=3)
    tensor(0.1667)
    >>> multiclass_hamming_distance(preds, target, num_classes=3, average=None)
    tensor([0.5000, 0.0000, 0.0000])

- Example (preds is float tensor):

    >>> import torch
    >>> from torchmetrics.functional.classification import multiclass_hamming_distance
    >>> target = torch.tensor([2, 1, 0, 0])
    >>> preds = torch.tensor([
    ...     [0.16, 0.26, 0.58],
    ...     [0.22, 0.61, 0.17],
    ...     [0.71, 0.09, 0.20],
    ...     [0.05, 0.82, 0.13],
    ... ])
    >>> multiclass_hamming_distance(preds, target, num_classes=3)
    tensor(0.1667)
    >>> multiclass_hamming_distance(preds, target, num_classes=3, average=None)
    tensor([0.5000, 0.0000, 0.0000])

- Example (multidim tensors):

    >>> import torch
    >>> from torchmetrics.functional.classification import multiclass_hamming_distance
    >>> target = torch.tensor([[[0, 1], [2, 1], [0, 2]], [[1, 1], [2, 0], [1, 2]]])
    >>> preds = torch.tensor([[[0, 2], [2, 0], [0, 1]], [[2, 2], [2, 1], [1, 0]]])
    >>> multiclass_hamming_distance(preds, target, num_classes=3, multidim_average='samplewise')
    tensor([0.5000, 0.7222])
    >>> multiclass_hamming_distance(preds, target, num_classes=3, multidim_average='samplewise', average=None)
    tensor([[0.0000, 1.0000, 0.5000],
            [1.0000, 0.6667, 0.5000]])

multilabel_hamming_distance

- torchmetrics.functional.classification.multilabel_hamming_distance(preds, target, num_labels, threshold=0.5, average='macro', multidim_average='global', ignore_index=None, validate_args=True)

Computes the average Hamming distance (also known as Hamming loss) for multilabel tasks:

$$\text{Hamming distance} = \frac{1}{N \cdot L} \sum_{i=1}^{N} \sum_{l=1}^{L} 1(y_{il} \neq \hat{y}_{il})$$

Where $y$ is a tensor of target values, $\hat{y}$ is a tensor of predictions, and $\bullet_{il}$ refers to the $l$-th label of the $i$-th sample of that tensor.

Accepts the following input tensors:

- `preds` (int or float tensor): `(N, C, ...)`. If preds is a floating point tensor with values outside the [0,1] range, we consider the input to be logits and automatically apply sigmoid per element. Additionally, we convert to an int tensor by thresholding with the value in `threshold`.
- `target` (int tensor): `(N, C, ...)`

The influence of the additional dimension `...` (if present) is determined by the `multidim_average` argument.

- Parameters

`threshold` (`float`) – Threshold for transforming probability to binary (0,1) predictions

`average` (`Optional[Literal['micro', 'macro', 'weighted', 'none']]`) – Defines the reduction that is applied over labels. Should be one of the following:

  - `micro`: Sum statistics over all labels
  - `macro`: Calculate statistics for each label and average them
  - `weighted`: Calculates statistics for each label and computes weighted average using their support
  - `"none"` or `None`: Calculates statistic for each label and applies no reduction

`multidim_average` (`Literal['global', 'samplewise']`) – Defines how additional dimensions `...` should be handled. Should be one of the following:

  - `global`: Additional dimensions are flattened along the batch dimension
  - `samplewise`: Statistic will be calculated independently for each sample on the `N` axis. The statistics in this case are calculated over the additional dimensions.

`ignore_index` (`Optional[int]`) – Specifies a target value that is ignored and does not contribute to the metric calculation

`validate_args` (`bool`) – bool indicating if input arguments and tensors should be validated for correctness. Set to `False` for faster computations.

- Returns

The returned shape depends on the `average` and `multidim_average` arguments:

  - If `multidim_average` is set to `global`:
    - If `average='micro'/'macro'/'weighted'`, the output will be a scalar tensor
    - If `average=None/'none'`, the shape will be `(C,)`
  - If `multidim_average` is set to `samplewise`:
    - If `average='micro'/'macro'/'weighted'`, the shape will be `(N,)`
    - If `average=None/'none'`, the shape will be `(N, C)`
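For multilabel input with an extra dimension, the `samplewise` shapes can be sketched by averaging the mismatch mask over the extra dimension only. This is a plain PyTorch illustration that assumes a strict 0.5 threshold; it does not call the library.

```python
import torch

# Shape (N=2, C=3, 2): two samples, three labels, one extra dimension of size 2.
target = torch.tensor([[[0, 1], [1, 0], [0, 1]], [[1, 1], [0, 0], [1, 0]]])
probs = torch.tensor(
    [
        [[0.59, 0.91], [0.91, 0.99], [0.63, 0.04]],
        [[0.38, 0.04], [0.86, 0.780], [0.45, 0.37]],
    ]
)

mismatch = ((probs > 0.5).long() != target).float()  # (N, C, 2)

# Average over the extra dimension only, keeping samples and labels apart.
per_sample_per_label = mismatch.mean(dim=2)          # average=None -> (N, C)
per_sample_macro = per_sample_per_label.mean(dim=1)  # average='macro' -> (N,)

print(per_sample_per_label.shape, per_sample_macro.shape)
```

The per-label rows are `[0.5, 0.5, 1.0]` and `[1.0, 1.0, 0.5]`, and their means are 4/6 and 5/6.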

- Example (preds is int tensor):

    >>> import torch
    >>> from torchmetrics.functional.classification import multilabel_hamming_distance
    >>> target = torch.tensor([[0, 1, 0], [1, 0, 1]])
    >>> preds = torch.tensor([[0, 0, 1], [1, 0, 1]])
    >>> multilabel_hamming_distance(preds, target, num_labels=3)
    tensor(0.3333)
    >>> multilabel_hamming_distance(preds, target, num_labels=3, average=None)
    tensor([0.0000, 0.5000, 0.5000])

- Example (preds is float tensor):

    >>> import torch
    >>> from torchmetrics.functional.classification import multilabel_hamming_distance
    >>> target = torch.tensor([[0, 1, 0], [1, 0, 1]])
    >>> preds = torch.tensor([[0.11, 0.22, 0.84], [0.73, 0.33, 0.92]])
    >>> multilabel_hamming_distance(preds, target, num_labels=3)
    tensor(0.3333)
    >>> multilabel_hamming_distance(preds, target, num_labels=3, average=None)
    tensor([0.0000, 0.5000, 0.5000])

- Example (multidim tensors):

    >>> import torch
    >>> from torchmetrics.functional.classification import multilabel_hamming_distance
    >>> target = torch.tensor([[[0, 1], [1, 0], [0, 1]], [[1, 1], [0, 0], [1, 0]]])
    >>> preds = torch.tensor(
    ...     [
    ...         [[0.59, 0.91], [0.91, 0.99], [0.63, 0.04]],
    ...         [[0.38, 0.04], [0.86, 0.780], [0.45, 0.37]],
    ...     ]
    ... )
    >>> multilabel_hamming_distance(preds, target, num_labels=3, multidim_average='samplewise')
    tensor([0.6667, 0.8333])
    >>> multilabel_hamming_distance(preds, target, num_labels=3, multidim_average='samplewise', average=None)
    tensor([[0.5000, 0.5000, 1.0000],
            [1.0000, 1.0000, 0.5000]])